Role of a Data Scientist in a Machine Learning Project

What is the role of a data scientist in a Machine Learning project? What steps or stages of work does a data scientist need to perform for a successful ML project?

When assigned to a project, what will be the role of a data scientist? Let us take a deep dive into the different things a data scientist is expected to do in the process. This post will be very helpful for budding and aspiring Data Scientists. If you are an experienced Data Scientist, you might already be familiar with some or most of the process below.

So far in my career I have spoken to many data scientists, and in most cases, when asked how they would approach a problem, I received a common answer: we will build a model (using XGBoost or CatBoost) and implement the solution. But believe me, that is not the whole story. Data Science is not just about building a model and implementing it. There are a lot of nuances that a Data Scientist is expected to design and develop in the process. This post is based on my 14+ years of practical experience in the industry.

Before jumping into the details, let me explain what I think the role of a Data Scientist is. In my view, a Data Scientist is expected to understand the business for which the solution is being developed. They should be aware of the data sources, the availability and quality of the data, and the meaning of each field in the data. A Data Scientist is expected to design a feasible and reliable ML solution that can be implemented and will be beneficial to the company or the audience.

What we are going to cover

- Scoping and formulation

- Data aspects

- Technique selection and model development

- Reporting and explanation

- Implementation

- Model maintenance

Scoping and formulation

This is the first and (probably) the most important step in the entire process. Proper scoping of the problem not only helps in the subsequent steps, but also sets the right expectations with the stakeholders. Formulating the problem correctly, on the other hand, gives a clear direction and keeps the work focused on a goal with clear business impact. Let us try to understand how we can scope and formulate a problem.

To define the scope, it is important to understand a few key aspects:

- What question(s) do the stakeholders want to answer?

- What is the product and how does it operate?

- What data points are available to address the question?

- What is the intended use of the model? Will it be an online (real time) or an offline (batch scoring) model?

Once we have answers to these questions, formulating the problem becomes easier. But how is formulating different from scoping? Formulating a problem means converting a business ask into a data-driven insight generation solution, which may or may not need Machine Learning. While formulating the problem, the questions that need to be answered are:

- What kind of solution is required?

- How will the solution be developed? What will be the role of machine learning in the process?

- What data is captured and available for the exercise?

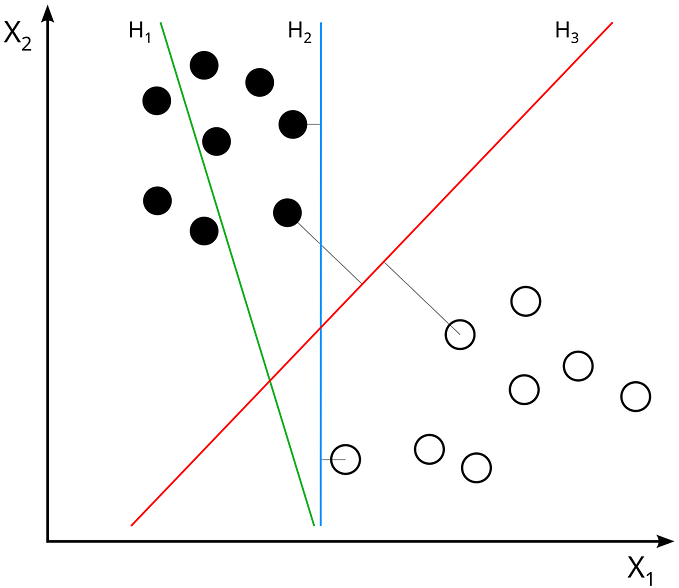

- What kind of machine learning algorithm (supervised or unsupervised) do we need to apply to generate the insights? If supervised ML, then what will the problem look like: classification or regression?

- How should the dependent/outcome variable be defined?

- What will the final solution look like?

- How will the score be generated and consumed by the system?

Once answers to these questions are in place, combining them will help the Data Scientist build the formulation. The remaining steps in the journey will depend on this formulation.

Data aspects

Data is the most important component for building a successful Machine Learning model. Feeding the correct data to the algorithm is critical; otherwise it will be garbage in, garbage out. Hence, it is the responsibility of a Data Scientist to ensure that the data is of high quality, that all required data is available, and that each and every attribute present in the data is understood. More details on why understanding every attribute is important are provided in the later section “Reporting and explanation”.

While validating the data is critical, understanding data availability is just as important. For example, suppose a model uses features from a data source that gets updated every 7 days, but the model generates a batch score every night. In such a scenario the implementation will fail, because the latest data will not be available to the model during scoring.
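The availability problem described above can be caught early with a simple freshness check. This is only a minimal sketch: it assumes each feature source records the date it was last refreshed, and the source names, dates, the `max_age_days` tolerance and the `stale_sources` helper are all hypothetical.

```python
from datetime import date, timedelta

def stale_sources(last_refreshed, scoring_date, max_age_days=1):
    """Return the names of feature sources too old for this scoring run.

    last_refreshed: dict mapping a source name to the date it was last updated.
    max_age_days:   oldest acceptable data age for a daily batch score (assumed).
    """
    cutoff = scoring_date - timedelta(days=max_age_days)
    return sorted(
        name for name, refreshed in last_refreshed.items() if refreshed < cutoff
    )

# Hypothetical sources: one refreshed nightly, one refreshed every 7 days.
last_refreshed = {
    "transactions_daily": date(2024, 3, 9),
    "customer_profile_weekly": date(2024, 3, 4),
}

print(stale_sources(last_refreshed, scoring_date=date(2024, 3, 10)))
```

Running a check like this before each batch score makes it visible during development, rather than in production, that a weekly source cannot feed a nightly model as is.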

Timeline definition is another key factor to consider. Questions like the following should be answered while building the data:

- Data from which time period should be considered?

- Was there a paradigm shift in the business? If so, only data from the period that represents the current scenario should be considered.

- What should be the in-time data vs. the out-of-time data?

If one needs to estimate, roughly 60% of the time needs to be spent here. Proper validation and quality checks need to be done to avoid rework and the resulting delay in delivery.
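The timeline questions above can be made concrete with a date-based split. The sketch below is illustrative only: the `event_date` field, the cut-off dates and the `split_by_time` helper are assumptions, not something prescribed by any particular project.

```python
from datetime import date

def split_by_time(rows, dev_start, dev_end, oot_end):
    """Split records into in-time (development) and out-of-time sets.

    rows: list of dicts, each with an "event_date" key (hypothetical field name).
    In-time:     dev_start <= event_date <= dev_end
    Out-of-time: dev_end   <  event_date <= oot_end
    Records before dev_start (e.g. before a business change) are dropped.
    """
    in_time = [r for r in rows if dev_start <= r["event_date"] <= dev_end]
    out_of_time = [r for r in rows if dev_end < r["event_date"] <= oot_end]
    return in_time, out_of_time

rows = [
    {"id": 1, "event_date": date(2022, 11, 15)},  # before the assumed business change
    {"id": 2, "event_date": date(2023, 2, 1)},
    {"id": 3, "event_date": date(2023, 8, 20)},
    {"id": 4, "event_date": date(2024, 1, 10)},
]

in_time, out_of_time = split_by_time(
    rows,
    dev_start=date(2023, 1, 1),
    dev_end=date(2023, 12, 31),
    oot_end=date(2024, 3, 31),
)
print([r["id"] for r in in_time])
print([r["id"] for r in out_of_time])
```

Here the in-time set would be used for development, while the out-of-time set is kept aside to check how the model behaves on a later period.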

Technique selection and model development

Next in the stack comes selecting the proper modeling technique. Based on the formulation above, now is the time to choose it. While selecting the technique, a few important questions should be answered:

- Will the technique help in answering the question?

- What will be the complexity of the technique?

- What will be the resource requirement?

- Will the results obtained from the model justify the cost of implementing and running it?

- What will be the estimated scoring time?
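The last question, estimated scoring time, can be roughly answered by timing a small sample and extrapolating. The sketch below assumes scoring cost grows about linearly with the number of rows; `toy_score` is a hypothetical stand-in for a real model's scoring call, and the row counts are made up.

```python
import time

def estimate_scoring_time(score_fn, sample_rows, total_rows, repeats=3):
    """Extrapolate full-batch scoring time (seconds) from a timed sample.

    Assumes scoring cost grows roughly linearly with the number of rows.
    """
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        for row in sample_rows:
            score_fn(row)
        # Keep the fastest run to reduce noise from other processes.
        best = min(best, time.perf_counter() - start)
    per_row = best / len(sample_rows)
    return per_row * total_rows

# Hypothetical stand-in for a trained model's scoring function.
def toy_score(row):
    return 0.3 * row["x1"] + 0.7 * row["x2"]

sample = [{"x1": i, "x2": i * 2} for i in range(1_000)]
estimate = estimate_scoring_time(toy_score, sample, total_rows=5_000_000)
print(f"Estimated batch scoring time: {estimate:.1f} s")
```

An estimate like this is only indicative, but it is usually enough to tell whether a candidate technique fits inside a nightly batch window or a real-time latency budget.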

Once the model family is selected, next comes development: training, training and more training. Remember to validate after each training run to ensure that progress is being made in the right direction.

Reporting and explanation

After finishing model development and validation, the next step is to report the performance of the model. While reporting, make sure all metrics are computed on new, unseen validation data, and not on the training data or the test data that was used for validation during the development process.

Based on the business requirement and model usage, different analyses of the score might need to be reported, such as a histogram of the score for each cell of the confusion matrix, or an AUC chart.
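As an illustration of the first analysis mentioned above, the sketch below groups scores by confusion-matrix cell and bins them into a simple histogram. The labels, scores, the 0.5 threshold and the number of bins are made up for illustration; in practice they would come from the unseen validation data and the business use case.

```python
from collections import defaultdict

def scores_by_confusion_cell(y_true, scores, threshold=0.5):
    """Group model scores by confusion-matrix cell (TP, FP, TN, FN)."""
    cells = defaultdict(list)
    for actual, score in zip(y_true, scores):
        predicted = 1 if score >= threshold else 0
        if predicted == 1:
            cell = "TP" if actual == 1 else "FP"
        else:
            cell = "FN" if actual == 1 else "TN"
        cells[cell].append(score)
    return dict(cells)

def histogram(values, bins=5):
    """Count values falling in equal-width bins over [0, 1]."""
    counts = [0] * bins
    for v in values:
        # A score of exactly 1.0 goes into the last bin.
        counts[min(int(v * bins), bins - 1)] += 1
    return counts

# Made-up labels and scores from a held-out set, for illustration only.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
scores = [0.91, 0.12, 0.48, 0.77, 0.63, 0.05, 0.55, 0.38]

cells = scores_by_confusion_cell(y_true, scores)
for cell in ("TP", "FP", "TN", "FN"):
    print(cell, histogram(cells.get(cell, [])))
```

Looking at where the false positives and false negatives sit on the score scale helps when discussing the decision threshold with stakeholders.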

Explainability is another important aspect of reporting. Stakeholders commonly want to understand what the key driving factors are, why the model is predicting a given outcome, what the other important factors are, whether the directional impacts are in line with business justification, and more.

Implementation

It is the job of the Data Scientist to develop the implementation code, replicating the data extraction, pre-processing, feature generation and finally score generation by the model. In complex systems, the Data Scientist should work hand in hand with the engineering team to ensure the implementation is done exactly the way it should be.

Model maintenance

So the model development and implementation are done, huh! That should be the end of the project. Wait, no! The role of a Data Scientist is not yet done. A proper charter needs to be built, and the required mechanisms should be developed so that the model gets updated or re-trained with minimum intervention.
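One possible form such a mechanism could take is an automated check that flags the model for re-training when its monitored performance stays below the level seen at development time. This is a sketch under assumptions: the choice of AUC as the monitored metric, the `max_drop` and `min_periods` values and the `needs_retraining` helper are all hypothetical and would be agreed in the charter.

```python
def needs_retraining(baseline_auc, recent_aucs, max_drop=0.05, min_periods=2):
    """Flag a re-train when performance stays below baseline for several periods.

    baseline_auc: AUC measured on unseen data at development time.
    recent_aucs:  AUC per monitoring period, oldest first.
    max_drop:     tolerated drop from baseline (assumed value).
    min_periods:  consecutive latest periods that must breach (assumed value).
    """
    breaches = [auc < baseline_auc - max_drop for auc in recent_aucs]
    # Look only at the latest `min_periods` monitoring periods.
    latest = breaches[-min_periods:]
    return len(latest) == min_periods and all(latest)

# Made-up monitoring history for illustration.
print(needs_retraining(0.82, [0.81, 0.79, 0.76, 0.75]))
print(needs_retraining(0.82, [0.81, 0.76, 0.80]))
```

Requiring several consecutive breaches is a design choice that avoids re-training on a single noisy period; the flag can then kick off the same data extraction and training code used during development.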

Now it is done.

Conclusion

The role of a data scientist is not just developing a Machine Learning model; it is much more than that. A good data scientist needs to handle scoping, formulation, data extraction, data quality checks, model development, performance reporting, implementation and model maintenance.

That will be all on this topic. I hope you now have a better idea of how to approach a Machine Learning solution and of the different aspects you need to take care of to build a successful solution.

If you like it, do follow me for more such posts ☺.